Adversarial Machine Learning: A New Frontier

July 18, 2022

Introduction

Over the last decade, Machine Learning (ML) has become increasingly more commonplace, transcending the digital world into that of the physical. While some technologies are practically synonymous with ML (like home voice assistants and self-driving cars), it isn’t always as noticeable when big buzzwords and flashy marketing jargon haven’t been used. Here is a non-exhaustive list of common machine learning use cases:

- Recommendation algorithms for streaming services and social networks

- Facial recognition/biometrics such as device unlocking

- Targeted ads tailored to specific demographics

- Anti-malware & anti-spam security solutions

- Automated customer support agents and chatbots

- Manufacturing, quality control, and warehouse logistics

- Bank loan, mortgage, or insurance application approval

- Financial fraud detection

- Medical diagnosis

- And many more!

Pretty incredible, right? But it’s not just Fortune 500 companies or sprawling multinationals using ML to perform critical business functions. With the ease of access to vast amounts of data, open-source libraries, and readily-available learning material, ML has been brought firmly into the hands of the people.

It's a game of give and take

Libraries such as SciKit, Numpy, TensorFlow, PyTorch, and CreateML have made it easier than ever to create ML models that solve complex problems, including tasks that only a few years ago could have been done solely by humans - and many, at that. Creating and implementing a model is now so frictionless that you can go from zero to hero in hours. However, as with most sprawling software ecosystems, as the barrier for entry lowers, the barrier to secure it rises.

As is often the case with significant technological advancements, we create, design, and build in a flurry, then gradually realize how the technology can be misused, abused, or attacked. With how easily ML can be harnessed and the depth to which the technology has been woven into our lives, we have to ask ourselves a few tricky questions:

- Could someone attack, disrupt or manipulate critical ML models?

- What are the potential consequences of an attack on an ML model?

- Are there any security controls in place to protect against attack?

And perhaps most crucially:

- Could you tell if you were under attack?

Depending on the criticality of the model and how an adversary could attack it, the consequences of an attack can range from unpleasant to catastrophic. As we increasingly rely on ML-powered solutions, the attacks against ML models - known broadly as adversarial machine learning (AML) - are becoming more pervasive now than ever.

What is an Adversarial Machine Learning attack?

An adversarial machine learning attack can take many forms, from a single pixel placed within an image to produce a wrong classification to manipulating a stock trading model through data poisoning or inference for financial gain. Adversarial ML attacks do not resemble your typical malware infection. At least, not yet - we’ll explore this later!

Adversarial ML is a relatively new, cutting-edge frontier of cybersecurity that is still primarily in its infancy. Research into novel attacks that produce erroneous behavior in models and can steal intellectual property is only on the rise. An article on the technology news site VentureBeat states that in 2014 there were zero papers regarding adversarial ML on the research sharing repository Arxiv.org. As of 2020, they record this number as an approximate 1,100. Today, there are over 2,000.

The recently formed MITRE - ATLAS (Adversarial Threat Landscape for Artificial-Intelligence Systems), made by the creators of MITRE ATT&CK, documents several case studies of adversarial attacks on ML production systems, none of which have been performed in controlled settings. It's worth noting that there is no regulatory requirement to disclose adversarial ML attacks at the time of writing, meaning that the actual number, while almost certainly higher, may remain a mystery. A publication that deserves an honorable mention is the 2019 draft of ‘A Taxonomy and Terminology of Adversarial Machine Learning’ by the National Institute of Standards and Technology (NIST). The content of which has proven invaluable in so far as to create a common language and conceptual framework to help define the adversarial machine learning problem space.

It's not just the algorithm

Since its inception, AML research has primarily focused on model/algorithm-centric attacks such as data poisoning, inference, and evasion - to name but a few. However, the attack surface has become even wider still. Instead of targeting the underlying algorithm, attackers are instead choosing to target how models are stored on disk, in-memory, and how they’re deployed and distributed. While ML is often touted as a transcendent technology that could almost be beyond the reach of us mere mortals, it’s still bound by the same constraints as any other piece of software, meaning many similar vulnerabilities can be found and exploited. However, these are often outside the purview of existing security solutions, such as anti-virus and EDR.

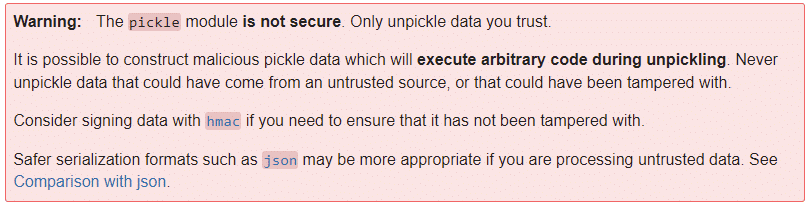

To illustrate this point, we need not look any further than the insecurity and abuse of the Pickle file format. For the uninitiated, Pickle is a serialized storage format which has become almost ubiquitous with the storage and sharing of pre-trained machine learning models. Researchers from TrailOfBits show how the format can execute malicious code as soon as a model is loaded using their open source tool called ‘Fickling’. This significant insecurity has been acknowledged since at least 2011, as per the Pickle documentation:

Considering that this has been a known issue for over a decade, coupled with the continued use and ubiquity of this serialization format, it makes the thought of an adversarial pickle a scary one.

Cost and consequence

The widespread adoption of ML, combined with the increasing level of responsibility and trust, dramatically increases the potential attack surface for adversarial attacks and possible consequences. Businesses across every vertical depend on machine learning for their critical business functions, which has led the machine learning market to an approximate valuation of over $100 billion, with estimates of up to multiple trillion by the year 2030. These figures represent an ever enticing target for cybercriminals and espionage alike.

The implications of an adversarial attack vary depending on the application of the model. For example, a model that classifies types of iris flowers will have a different threat model than a model that predicts heart disease based on a series of historical indicators. However, even with models that don't have a significant risk of ‘going wrong’, the model(s) you deploy may be your company's crown jewels. That same iris flower classifier may be your competitive advantage in the market. If it was to be stolen, you risk losing your IP and your advantage along with it. While not a fully comprehensive breakdown, the following image helps to paint a picture of the potential ramifications of an adversarial attack on an ML model:

But why now?

We've all seen news articles warning of impending doom caused by machine learning and artificial intelligence. It's easy to get lost in fear-mongering and can prove difficult to separate the alarmist from the pragmatist. Even reading this article, it’s easy to look on with skepticism. But we're not talking about the potential consequences of ‘the singularity’ here - HAL, Skynet, or the Cylons chasing a particular Battlestar will all agree that we're not quite there yet. We are talking about ensuring that security is taken into active consideration in the development, deployment, and execution of ML models, especially given the level of trust placed upon them.

Just as ML transitioned from a field of conceptual research into a widely accessible and established sector, it is now transitioning into a new phase, one where security must be a major focal point.

Conclusions

Machine learning has reached another evolutionary inflection point, where it has become more accessible than ever and no longer requires an advanced background in hard data science/statistics. As ML models become easier to deploy, use, and more commonplace within our programming toolkit, there is more room for security oversights and vulnerabilities to be introduced.

As a result, AML attacks are becoming steadily more prevalent. The amount of academic and industry research in this area has been increasing, with more attacks choosing not to focus on the model itself but on how it is deployed and implemented. Such attacks are a rising threat that has largely gone under the radar.

Even though AML is at the cutting edge of modern cybersecurity and may not yet be as household a name as your neighborhood ransomware group, we have to ask the question: when is the best time to defend yourself from an attack, before or after it’s happened?

About HiddenLayer

HiddenLayer helps enterprises safeguard the machine learning models behind their most important products with a comprehensive security platform. Only HiddenLayer offers turnkey AI/ML security that does not add unnecessary complexity to models and does not require access to raw data and algorithms. Founded in March of 2022 by experienced security and ML professionals, HiddenLayer is based in Austin, Texas, and is backed by cybersecurity investment specialist firm Ten Eleven Ventures. For more information, visit www.hiddenlayer.com and follow us on LinkedIn or Twitter.

Related Research

Exploring the Security Risks of AI Assistants like OpenClaw

OpenClaw (formerly Moltbot and ClawdBot) is a viral, open-source autonomous AI assistant designed to execute complex digital tasks, such as managing calendars, automating web browsing, and running system commands, directly from a user's local hardware. Released in late 2025 by developer Peter Steinberger, it rapidly gained over 100,000 GitHub stars, becoming one of the fastest-growing open-source projects in history. While it offers powerful "24/7 personal assistant" capabilities through integrations with platforms like WhatsApp and Telegram, it has faced significant scrutiny for security vulnerabilities, including exposed user dashboards and a susceptibility to prompt injection attacks that can lead to arbitrary code execution, credential theft and data exfiltration, account hijacking, persistent backdoors via local memory, and system sabotage.

Stay Ahead of AI Security Risks

Get research-driven insights, emerging threat analysis, and practical guidance on securing AI systems—delivered to your inbox.